Should You A/B Test Small Changes or Big Changes?

A common question I hear about A/B testing is whether to test small changes or big changes. My answer, almost always, is “test big changes.”

There’s an immediate follow-up question: “But if I test a big change and it wins/loses, how will I know exactly what made it win/lose?”

Who cares what made it win?! Take pleasure from the improvement or learn from the loss, and move on to the next experiment!

The only upside of refinement, meaning testing one small change at a time, is knowing precisely what led to the result. That comes nowhere near making up for the downsides:

- Small changes usually lead to small outcomes. You may read about or experience a small test that made a disproportionately big impact, but those cases are very rare. (Their rarity is why people write about them. Nobody writes about the small tests that led to a 0.5% increase in conversion rates, which is a problem.)

- Whether a small change helps or hurts, the test will need to run longer before the difference is noticeable and statistically significant, because a smaller effect takes more visitors to separate from random noise.

- There is a significant opportunity cost. While you're running a test with a small word change or a different button color, which may or may not result in a slight improvement, you are definitely not running tests that could result in big, double-digit improvements.

Testing small changes takes more time and has less of an impact. Testing big changes, on the other hand, results in bigger outcomes and takes less time to reach a conclusive answer. Even if the outcome is negative, you will know about it much sooner, meaning you can learn from it faster and move on to the next test.
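To put rough numbers on the run-time point, here is a minimal sketch of the usual sample-size approximation for comparing two conversion rates. It assumes a two-sided two-proportion z-test at 5% significance and 80% power; the function name, the 5% baseline conversion rate, and the two lifts are illustrative assumptions, not figures from any real test.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH variant to detect a relative lift.

    Hypothetical helper using the normal approximation for a two-sided
    two-proportion z-test. `baseline` is the control conversion rate
    (e.g. 0.05), `relative_lift` is the change to detect (e.g. 0.20 = +20%).
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_power) ** 2 * variance / (p1 - p2) ** 2
    return ceil(n)


# Assumed scenario: 5% baseline conversion rate.
small_change = visitors_per_variant(0.05, 0.005)  # a 0.5% relative lift
big_change = visitors_per_variant(0.05, 0.20)     # a 20% relative lift

print(f"Small change: {small_change:,} visitors per variant")
print(f"Big change:   {big_change:,} visitors per variant")
print(f"Ratio:        {small_change / big_change:,.0f}x")
```

Because the required sample grows with the inverse square of the difference you are trying to detect, a lift that is 40 times smaller needs on the order of a thousand times more traffic under these assumptions. Real testing tools may use different formulas, so treat the output as an order-of-magnitude guide.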

Test big changes.

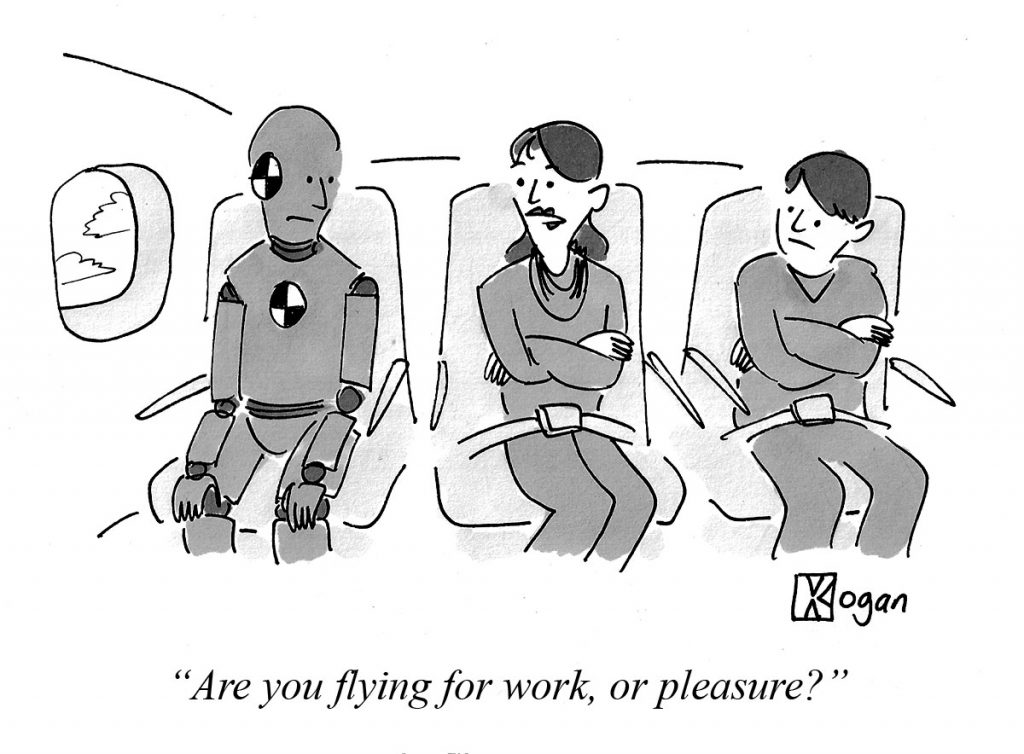

And whatever you do, don’t worry about button colors.

◼

Greg Kogan
